Chat GPT – impressive but limited

Chat GPT is a very impressive piece of technology.

It seems to excel at rapidly compiling the information available on Wikipedia (and other web sites), producing coherent summaries. It also writes full sentences and is able to mimic styles.

Of course, as a professor I am happy if my students can achieve this: yet, well before Chat GPT, it had become obvious that obtaining poorly referenced facts and regurgitating them was fairly easy for anyone with access to a computer and a search engine. So, Chat GPT’s impressive contribution is its ability to synthesize and write well.

Facts without opinion

What Chat GPT can’t do – or rather, what its designers prevent it from doing – is have opinions¹. An opinion is a position that cuts through the facts, using them to fashion a morally, politically and emotionally grounded view of the topic under discussion.

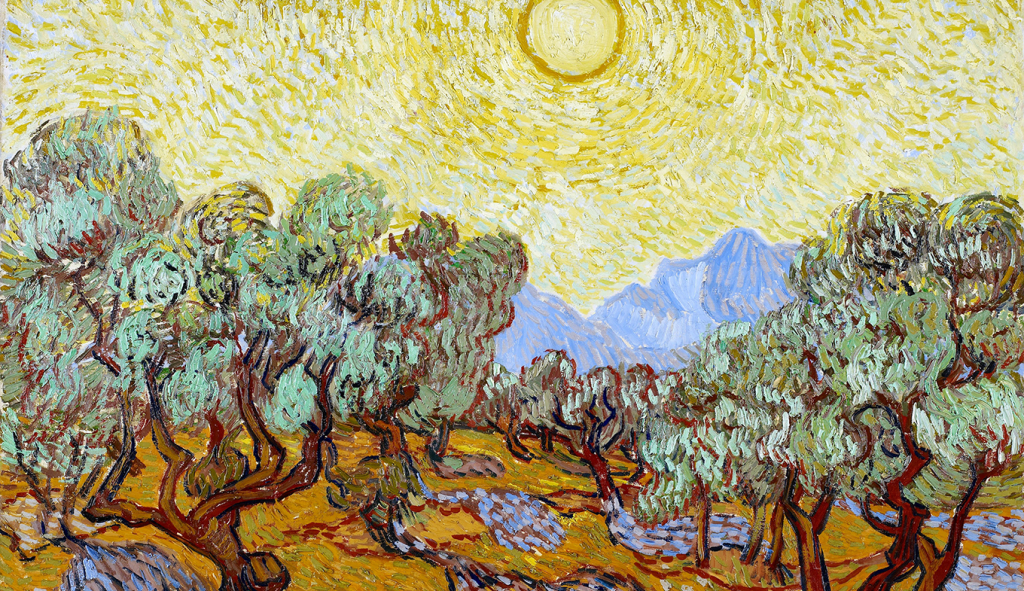

An opinion must recognize ambiguity, and occasionally it needs to stand against prevailing views: van Gogh did not follow the prevailing view about what made a good painting, and died feeling he was a failure. We now believe he was correct in his minority opinion, which was not a compilation of existing or past opinions, but an acutely original view of what painting could be.

I of course want students to understand, probe, and evaluate ‘facts’: but I also want them to develop well thought-out opinions, and to express views about what the facts imply – opinions and views are necessary if the world is to be understood (as opposed to mapped) and if futures are to be imagined.

Mapping and synthesizing information is useful: is it intelligent?

Chat GPT seems good at quickly mapping the current state of knowledge, albeit without providing sources and without backing up facts with much evidence.

It does not have opinions nor does it articulate an explicit point of view on the world. If it did, it would become highly controversial.

Furthermore, such opinions and views would be simulations, since personal opinions are grounded in, and derive value from, experience, politics, morality, emotions, spirituality – these contribute to human intelligence, and human intelligence must grapple with them².

Opinions and views of the world do not come pre-packaged. They reflect, and are embodied by, the person. A computer’s simulated call for freedom, for instance, would not resonate like Nelson Mandela’s; its description of a train ride could not chill like Imre Kertész’s.

Chat GPT’s implicit view of the world

Implicitly, though, Chat GPT does have a view of the world: the world as a collection of data points that can be compiled to answer questions (from questioners who must share this view). This is the engineering or computer science view of the world³.

To be humanly intelligent is to go well beyond this. Compiling and synthesising data is the easy part, even though it is difficult to do well. Once this is done, the real difficulties begin: what does the information mean, what should be done with it, what is right and what is wrong? – these are complex and ambiguous areas that AI cannot (and should not?) venture into.

It is only if we believe there is a single way of understanding the world (Chat GPT’s), and that differences of opinion are solely due to differences in available facts and/or in logical deduction, that AI could be deemed intelligent in a human sense.

Chat GPT, able to marshal more facts than most of us, would indeed, in this dystopian world, represent the future of intelligence.

Data collation and writing

As it stands – and in my view – Chat GPT is an impressive collator of data, and an impressive example of machine deciphering (I deliberately avoided ‘understanding’) and use of language.

It conveys no political, moral or emotional stance, nor does it grapple with the ambiguities and complexities of establishing such stances. Maybe that is what Artificial Intelligence is, and why it will remain artificial.

Chat GPT: a true idiot savant?

Human intelligence is capable of more than this. Chat GPT, and before it Wikipedia, search engines and repository libraries, have revealed – over the centuries – that discovering, compiling and synthesising facts, whilst important (and more ambiguous than Chat GPT suggests), do not constitute intelligence.

There exists a term for compilers of facts who assign little meaning to them: “idiots savants”.

When used to denote a person, this term is offensive: each human has dignity and essence that extend beyond their capacity to juggle facts or understand them. Each human being’s intelligence is multifaceted, and incorporates emotions, feelings, physical sensations… Calling someone an idiot savant strips them of their humanity by ignoring the multiple facets of intelligence and focussing on just one of them.

However, when applied to Chat GPT, I think it appropriate.

————————————————————————————

1 My daughter is currently doing a project on surrogacy. So I asked Chat GPT: “What are the arguments for and against surrogate pregnancy, and what is your opinion about it?” It provided a decent if a little uninspiring (and unreferenced) reply to the first part of the question: good enough for a few hundred words. It then gave an important reply to the second part of the question. Here is the full answer:

“Surrogate pregnancy is when a woman carries and gives birth to a child for another person or couple, typically using in vitro fertilization (IVF) with the intended parents’ egg and sperm.

Arguments for surrogate pregnancy include:

- It allows individuals or couples who are unable to conceive a child naturally to have biological children of their own.

- Surrogacy can be a more efficient and cost-effective option than adoption.

Arguments against surrogate pregnancy include:

- Some people believe that surrogacy exploits women and reduces them to mere baby-making machines.

- Others believe that surrogacy raises complex ethical questions about the commodification of human reproduction and the creation of a market for children.

- There is also a risk that the surrogate mother may want to keep the child once she gives birth.

As a language model, I don’t have any personal opinions. Surrogacy is a complex and controversial issue with valid arguments on both sides. It is important to consider the moral and ethical implications of surrogacy and to ensure that the rights and well-being of all parties involved are protected.”

2 Science fiction writers – and I am thinking particularly of Becky Chambers – have envisioned worlds in which AI begins to develop personality, emotions, views on life and death, and so on. These books are fascinating, thoughtful and fun to read, but slightly unconvincing, if only because in these stories AI still thinks at the same pace as human beings, rather than being able to process information and generate ideas in parallel whilst performing hundreds of millions of calculations per second. The basis upon which AI develops emotions is also a little unclear. Still, I realise that there are views of AI that differ from mine!

3 Of course I am not suggesting that all engineers and computer scientists view the world this way. However, in these disciplines, the typical way of solving a problem is to break it down into “solvable” portions, to not welcome ambiguity, and to seek clear solutions. Furthermore, if two solutions are proposed, they can usually be ranked with one ‘better’ than the other.

ChatGPT is, as you state, good with facts at times (I’ve seen some laughable errors too), but it isn’t good at opinion, and this is probably a good thing.
