Readers of my recent blog posts will have worked out (!) that I am concerned about how reliance on AI prevents students from learning basic skills, depletes the number of people capable of supervising AI, and robs students of voice and authorship.

Of course, I acknowledge that students reading these posts may roll their eyes! Yet another old, hectoring and out-of-touch professor.

There is no denying my age, and I can’t vouch for my tone or touchedness: but I do understand why using AI may feel unavoidable to students, including those who would prefer to avoid it.

I set out below a few reasons that, to me, make it rational and logical to use AI in the context of university studies. I then make a short case for the importance of learning basic skills and for universities to begin thinking seriously about how skills can be taught (and assessed) in the era of AI.

To be fair, universities are thinking about this (a little): but they are also feeding the problem.

Universities are setting the example

First, universities themselves set a bad example: they have increasingly outsourced their services to AI assistants, complex computerised management systems, and impersonal FAQs. They are also investing in AI, promoting it, and presenting it as inevitable.

Books such as Teaching with AI (Bowen & Watson, 2024), to which McGill refers its professors, posit AI as inevitable and describe a world in which professors use AI to develop curricula, propose examination questions, and assist in marking.

In such a context, if I were a student, I would look around, listen, and learn from the best: if AI is inevitable and if universities are relying on it for administration and teaching, why should I not rely on it myself for producing as many essays and answers as I can?

Why not maximize one’s chances of obtaining university-sanctioned credentials?

Second, it is unfortunate but true that universities deliver credentials, passports to jobs.

Of course, AI may currently be devaluing these credentials, whilst concomitantly augmenting the value of manual and applied skills; but assuming degrees remain of some value, that value lies in signaling social status and the capacity to play the game.

If today’s game is played with AI, why reduce one’s chances of success by not using this useful tool?

Universities value competition and ‘excellence’, not learning

Universities like to flaunt excellence, achievement and – if possible – large grants and gifts from donors.

Thus, the values projected by universities are competition, winning and financial gain. In such a context – which merely reflects the wider values of late capitalism – students naturally look for the best way to compete, win and, in due course, make money.

AI seems to facilitate this by providing an edge over students who do not use it: and if AI provides an edge, even students reluctant to use it may feel forced into it.

Universities should refocus on teaching and value understanding

Given these compelling examples, pressures and enticements, it is understandable that students turn towards AI.

Yet it is not in their long-term interests. Even though using AI may become an important skill, one of the greatest skills students will require is to understand the issues that AI is addressing, assess the ideas it proposes, and make choices that balance the ethics, politics, values and intangible complexities of real situations.

Just as calculators greatly facilitate calculation but do not obviate the need to understand mathematics and the meaning of numbers and results, so AI will facilitate thinking problems through and proposing solutions without obviating the need to understand, make choices, and decide.

So universities need to (re)focus on teaching how to make choices and decisions (whether in medicine, astrophysics, planning or communications), which starts with learning about the nature of the problems being tackled, understanding how they have been thought about in the past, and thinking critically about them.

The importance of basic non-AI skills: some examples

This requires the teaching (and learning) of basic skills – such as data cleaning, writing, measuring and synthesizing – all of which AI can do faster, but each of which builds important capacities when performed by a student.

Data cleaning, for example, teaches one about the ambiguity of data, about the myriad small adjustments and decisions made to apparently ‘clean’ data, about the approximation of measurement, about the biases that cleaning can introduce, and so on.

Writing forces one to think iteratively. Thoughts don’t just appear: they start off rough and jumbled. But the effort of creating a first draft allows our subconscious to work things out, so that, when we rewrite, our ideas are clearer and more sophisticated. As drafts are shared with supervisors and colleagues, ideas improve and crystallize.

AI, which condenses all of this into a few prompts, robs us of the possibility of truly thinking.

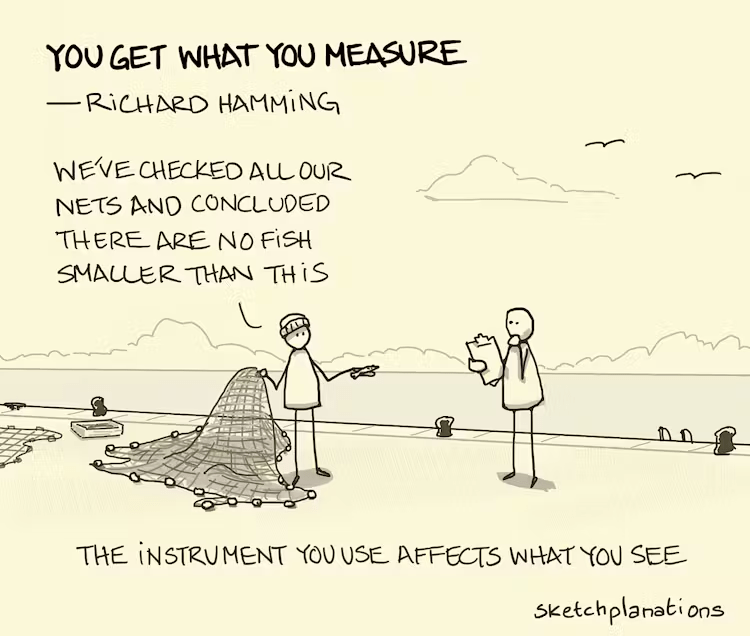

Thinking about how to measure something – say something as simple as income – forces us to consider the complexities of the concept: before or after tax? which taxes? earned income? government transfers? investment? annual, lifetime, hourly? individual, household, family, across all dependents? income hidden in corporations? capital gains?

Even once these questions have been addressed, actually obtaining the numbers is another matter entirely. AI can cobble together estimates, but to truly conceptualize and measure income is an exercise in financial, social, tax and equity thinking.

Synthesizing texts, like data cleaning, calls for many decisions to be taken, biases to be assessed and priorities to be defined, as well as for clarity. When synthesis is performed within the context of a specific study or theory, minor details within a set of documents may take on importance.

AI, though very good at general syntheses, may miss details that catch a reader’s attention: not only does a reader have reasons for reading (and then synthesizing) ideas, but these reasons may also evolve as familiarity with the material increases.

The challenge: valuing learning rather than outcomes and credentials

The challenge is to persuade students that, even though AI now seems to be part of life, learning the skills that AI seems to accelerate is critically important for properly using and supervising this technology.

BUT

If universities continue to assess students as they currently do, privileging outcome over process, results over learning, then there is an inbuilt incentive for students to use AI instead of undertaking the arduous tasks of studying and developing skills.

The onus, therefore, is on teachers and universities to find ways of teaching and assessing students that do not simply focus on outcomes.

Until we get there (and I write as a professor), many students will, understandably and pragmatically, be attracted to AI shortcuts.