AI in universities: short-term gains

AI is inevitable in the sense that it has been forced upon us by tech oligarchs, and that it provides practical tools for short-circuiting lengthy processes such as writing, synthesis, programming, cleaning data, and so on.

In the short term, and from a purely pragmatic perspective, there are many reasons to embrace AI (setting aside, for the sake of this post, its massive energy consumption, its surveillance function, its biases and hallucinations, and its ultimately commercial purpose, i.e. generating profits for the owners of AI systems).

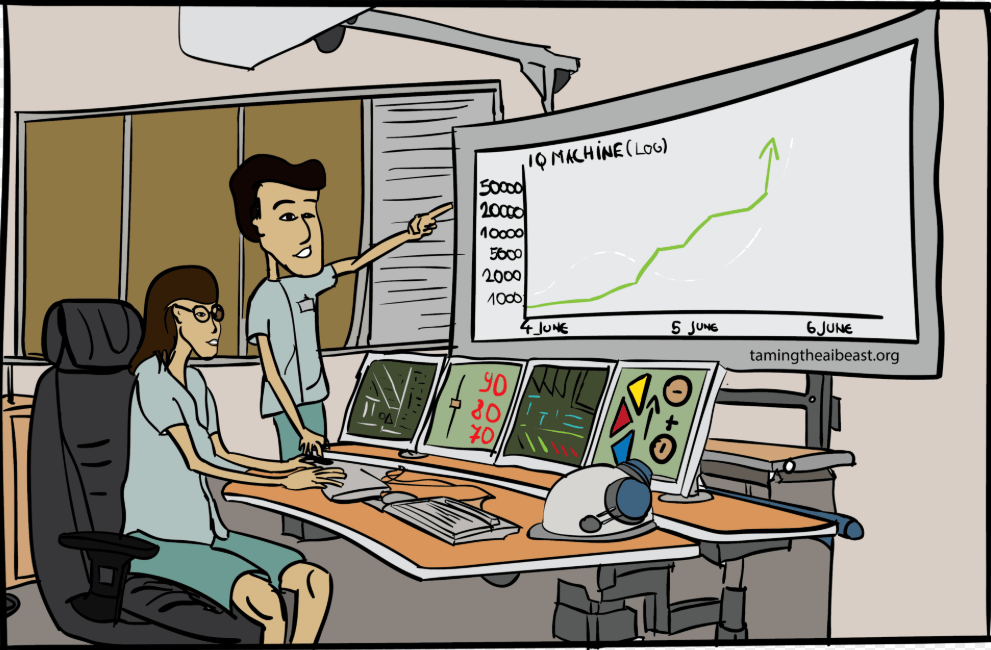

In the longer term, though, there is a risk that AI will harm the very functions it sets out to facilitate.

AI requires experienced oversight

Until AI corporations accept responsibility and liability for AI outputs (which they currently do not; thoughtful people should ask themselves why), managers and researchers remain responsible for what AI produces for them.

Yet AI cannot be the final arbiter of its own output. We know it can hallucinate, we know it has no real understanding of the problems it analyses, and we should be aware of the problem of feedback loops and be suspicious of black boxes.

Therefore, any responsible manager overseeing analysis produced by AI (and I include researchers whose assistants and students use AI) will want all output verified.

Even if AI has saved a great deal of time by crunching numbers, formatting reports and programming software, oversight is necessary not only to avoid mistakes but also for reasons of liability: the manager is responsible for this output; AI is just a tool.

How can one oversee AI outputs if one does not understand what it is doing?

But how can one oversee AI outputs? Only by having experienced staff, or by being experienced oneself, can an analysis be examined and its quality assessed. Typically, one must be familiar with the underlying analytical techniques and be able to verify each step of the analysis, each reference, each argument and each inference.

Even for simple machines, such as calculators, knowledge of arithmetic is an important safeguard: being able to quickly estimate a result’s order of magnitude is crucial, even when the precise result can only be found using a machine. Why is mental arithmetic so important? To catch basic typos, unit errors and the like.

Just as mental arithmetic is important when working with a calculator, so experience with tasks undertaken by AI is important for those who work with it.

Can students gain experience if they subcontract their learning to AI?

How can one obtain experience? By learning how to perform the tasks oneself – and that means learning how to perform them without AI.

It means being mentored and making the many mistakes that – taken together – add up to learning.

These mistakes, if carefully corrected and understood, lead students to develop knowledge and intuition, and to understand how analysis, synthesis, data cleaning and report-writing work. This knowledge and these skills then equip them to oversee AI when it performs these tasks.

Teaching and learning are processes that require time: AI provides speedy outcomes

Particularly in a university setting, where technicians, analysts and managers are trained, using AI to (apparently) increase learning ‘productivity’ is a false economy: it amounts to treating learning as an output rather than as a process that takes time.

If all that mattered were the speed at which students produce essays, theses, analysis and programs, then AI – for all its problems – would be great.

But that is not what universities are for: their function, in principle at least, is to train students and to teach them the processes that underpin writing, analysis and programming.

In due course, these students will become employees, entrepreneurs or researchers, and they will be expected to oversee the output of AI. But if they have not spent time mastering tasks and developing skills, and if they are unable to fully understand and verify AI’s outputs, then they will be unable to work effectively with AI.

Rather than overseeing a powerful (but potentially biased, hallucinating, and opaque) tool, they will be subjected to it – essentially useless (except as useful heads that can roll when AI makes mistakes).

Students who have not learned cannot oversee AI

As AI is incorporated into university curricula, and as it takes over tasks (summaries, synthesis, writing, programming…), students are deprived of opportunities to learn and to develop their own critical capacities; they will not know how to oversee or evaluate the processes performed by AI.

Universities should actively avoid (or strictly frame) AI use, at least when it comes to learning, teaching and training. Paradoxically, this is necessary for students to understand the processes AI undertakes and to be able to assess its outputs.

This will allow them to work with AI, to be critical of it, and possibly to understand what it produces.

But…

The proliferation of AI tools in science risks introducing a phase of scientific enquiry in which we produce more but understand less.

Messeri & Crockett, 2024, Nature, 627

… this is not just a risk for scientific enquiry.