Thinking about new technology

When powerful oligopolistic corporations force a technology upon us, telling us that there is no alternative and shoehorning it into search engines, answering services, operating systems and more, it is sensible to take a step back and think about it.

It is sensible to wonder why these oligopolies are doing this, who will profit, where the energy will come from, and what the alternatives are.

This is only possible, however, if one can think.

How does one learn to think?

One learns how to think by grappling with complex issues, by formulating one’s thoughts in writing (or maybe in some other medium, such as paint, clay or metal), and then by going back over them, altering and improving them.

If one does this many times with many different types of problem, one slowly develops an ability to think and, possibly, to create new thoughts.

Crucially, one learns how to think from mistakes, from feedback, and from intuitions that rest upon the millions of small thought processes undertaken as one interacts with facts, fiction, ideas, people and the wider world.

Each person learns slightly differently from their unique experiences and comes up with slightly different ideas, allowing knowledge to evolve incrementally as a social process.

What if technology unburdens us from thought?

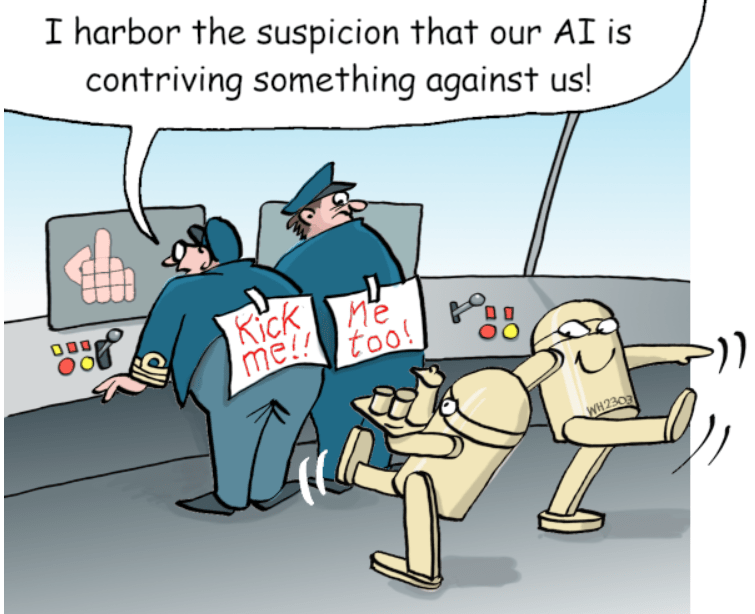

Now, imagine that the technology being forced upon us is specifically designed to save us from grappling with issues, from writing down and revising our ideas.

Imagine that this technology unburdens us of the effort necessary to learn how to create new thoughts.

Imagine that it flattens the billions of unique learning experiences into five or six algorithmic behemoths trained on data with unknown biases and errors.

Imagine, finally, that these five or six algorithms achieve these goals by scanning old thoughts and sentence structures (increasingly those produced by the algorithms themselves), reworking them, and allowing us to present them as our own.

This technology will have, apparently, ‘solved’ the problem of thinking …

If AI takes over thought, how can we think about it?

As AI is forced upon us by tech corporations, and as it is willingly (and naively) incorporated within institutions of ‘learning’ (it is inevitable, we are told), it will no longer be necessary for students to learn how to think, for professors to teach students how to think, or – for those who have wasted their time learning to think – to continue exercising thought.

Once this is achieved, who will have benefited?

The billions of credentialed idiots, now free from the burden of thinking (and, indeed, incapable of it)?

Or the few billionaires who own the rights to AI (and, so they have declared, to all the knowledge it ingurgitates)?